Woolly Walrus Games

Usability Testing

This case study describes the execution of a usability test conducted during the development of Beat Blast. The goals of this usability test were to establish a baseline of player performance, to establish and validate player performance measures, to identify design concerns that should be addressed to improve efficiency, productivity, and player satisfaction, and to detect patterns in performance.

The objective is to perform a usability test and analysis of a game with a novel mechanic that has no direct precedent.

Summary

The following questions should be tested to identify room for improvement in the player's effectiveness, productivity, and satisfaction.

1. Are features being used appropriately (dodge, note pickup, note placement, power ups)? (testing effectiveness)

2. How quickly are milestones reached (boss 1, boss 2)? (testing productivity)

3. Are there frustrating elements of the game? (testing satisfaction)

4. Are there enjoyable elements of the game? (testing satisfaction)

5. How strong is the player’s understanding of the technology? (testing effectiveness)

6. Are there patterns in a single player's methods of gameplay? (detecting patterns in performance)

7. Are there patterns in all players' methods of gameplay? (detecting patterns in performance)

Upon review of this usability test plan, including the draft task scenarios and usability goals for Beat Blast, documented acceptance of the plan is expected.

Methodology

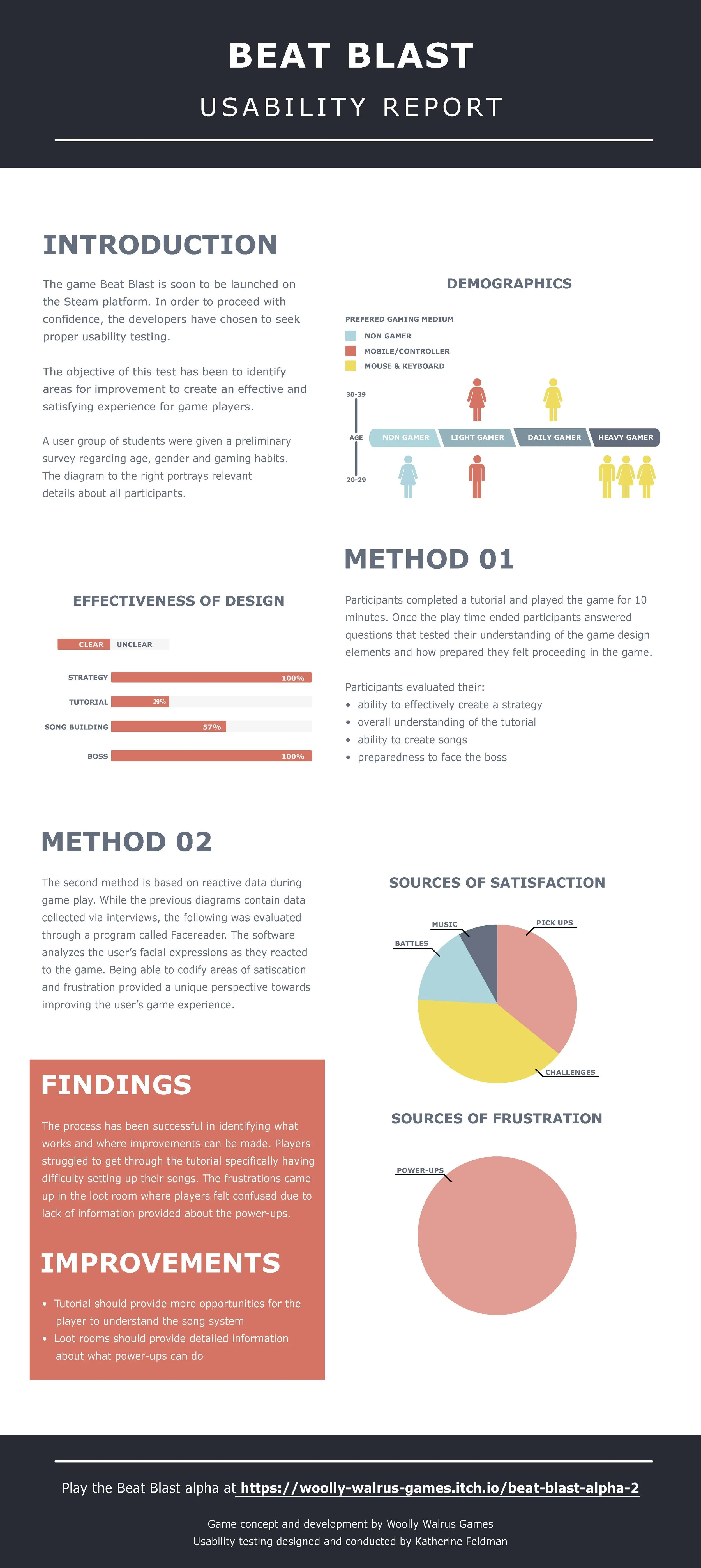

There were 7 participants in this usability study. The test was performed in a usability testing facility using video recordings, voice recordings, and screen capture. The tests collected demographic and screening information (gender, age, whether participants are considered ‘gamers’, other hobbies, music preferences, and how they describe their own performance under stress) as well as information on the gameplay (frustration points in the game, understanding of the game mechanics, use of mechanics, and whether they would want to keep playing or play again at a later date).

Procedure

Participants took part in the test in the usability lab, where a computer running a beta version of the game and supporting software was set up in a typical office environment. Each participant’s interaction with the game was monitored by me, seated in the same office. The test sessions were videotaped and analyzed using Noldus FaceReader technology.

Participants signed an informed consent form acknowledging that participation is voluntary, that participation can cease at any time, and that the session will be videotaped but their identity will be kept private. At this point, as the facilitator, I asked each participant whether they had any questions.

Participants completed a pre-test survey that gathered their demographic and background information. I explained that the time taken to complete the test task would be measured and that exploratory behaviour outside the task flow should wait until after task completion.

As the facilitator, I observed and entered player behaviour, player comments, and system actions in the data logging application. Once gameplay time ended, I asked questions about specific events that took place during play. After all task scenarios were attempted, the participant completed the post-test interview, which was designed to explore levels and moments of satisfaction.

The first 5 minutes of each session were allotted for the demographic/screening survey. The following 10 minutes were allotted for gameplay tasks. The final 5 minutes were for the gameplay interview.

Test Observer

Provided overview of study to participants

Defined usability and purpose of usability testing to participants

Assisted in conduct of participant and observer debriefing sessions

Responded to participant's requests for assistance

Ethics

All persons involved with the usability test were required to adhere to the following ethical guidelines:

The performance of any test participant must not be individually attributable

An individual participant's name should not be used in reference outside the testing session

Participants’ identifying information will not be shared with anyone outside of the testing facility

Usability Metrics

Usability metrics refer to player performance measured against specific performance goals necessary to satisfy usability requirements. Scenario completion success rates, adherence to dialog scripts, error rates, and subjective evaluations were used. Time to completion for each scenario was also collected.

Scenario Completion

Each scenario required that the participant interpret instructions based on the UI and perform the task. The scenario is completed when the participant indicates the scenario's goal has been obtained (whether successfully or unsuccessfully) or the participant requests and receives sufficient guidance as to warrant scoring the scenario as a critical error.

Critical Errors

Critical errors are assigned when the participant initiates (or attempts to initiate) an action that will result in the goal state becoming unobtainable. In general, critical errors are unresolved errors during the process of completing the task or errors that produce an incorrect outcome.

Non-Critical Errors

Non-critical errors are errors that are recovered from by the participant or, if not detected, do not result in processing problems or unexpected results. Although non-critical errors can be undetected by the participant, when they are detected, they are generally frustrating to the participant.

These errors may be procedural, in which the participant does not complete a scenario in the most efficient way (e.g., excessive steps and keystrokes). These errors may also be errors of confusion (e.g., navigating in the wrong direction, or using a UI control incorrectly, such as attempting to edit an un-editable field).

Non-critical errors can always be recovered from during the process of completing the scenario. Exploratory behaviour, such as opening the wrong menu while searching for a function, will be coded as a non-critical error.

Subjective Evaluations

Subjective evaluations regarding ease of use and satisfaction are collected via surveys, and during debriefing at the conclusion of the session. The surveys utilize free-form responses and rating scales.

Scenario Completion Time (time on task)

The time to complete each scenario, not including subjective evaluation durations, is recorded.

Usability Goals

This section describes the usability goals for Beat Blast.

Completion Rate

Completion rate is the percentage of test participants who successfully complete the task without critical errors. A critical error is defined as an error that results in an incorrect or incomplete outcome. In other words, the completion rate represents the percentage of participants who, when they are finished with the specified task, have an "output" that is correct. Note: If a participant requires assistance in order to achieve a correct output then the task is scored as a critical error and the overall completion rate for the task is affected.

A completion rate of 100% is the goal for each task in this usability test.
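As a rough illustration of the definition above, the completion rate could be computed as in the following sketch. The `TaskAttempt` record, its field names, and the sample data are hypothetical and are not taken from the study's data logging application; the only rule drawn from the text is that a critical error or a need for assistance counts against completion.

```python
from dataclasses import dataclass


@dataclass
class TaskAttempt:
    """One participant's attempt at one task (hypothetical record layout)."""
    participant_id: str       # anonymized label, never a real name
    critical_errors: int = 0  # number of critical errors observed
    assisted: bool = False    # True if the facilitator had to help


def completion_rate(attempts):
    """Percentage of attempts finished with no critical error and no assistance."""
    if not attempts:
        raise ValueError("no attempts recorded")
    completed = sum(
        1 for a in attempts if a.critical_errors == 0 and not a.assisted
    )
    return 100.0 * completed / len(attempts)


# Made-up data for 7 participants: five clean runs, one critical error,
# and one run that needed assistance (scored as a critical error).
sample_attempts = [
    TaskAttempt("P1"),
    TaskAttempt("P2"),
    TaskAttempt("P3", critical_errors=1),
    TaskAttempt("P4"),
    TaskAttempt("P5", assisted=True),
    TaskAttempt("P6"),
    TaskAttempt("P7"),
]

print(f"Completion rate: {completion_rate(sample_attempts):.1f}%")
```

With this made-up sample, 5 of 7 attempts count as complete, so the task would fall short of the 100% goal.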

Error-Free Rate

Error-free rate is the percentage of test participants who complete the task without any errors (critical or non-critical errors). A non-critical error is an error that would not have an impact on the final output of the task but would result in the task being completed less efficiently.

An error-free rate of 100% is the goal for each task in this usability test.
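The error-free rate can be sketched in the same spirit. The input format below (one pair of error counts per participant) and the sample numbers are assumptions made for illustration only; assisted tasks are assumed to already be counted as critical errors, as described under Completion Rate.

```python
def error_free_rate(error_counts):
    """Percentage of participants with zero critical and zero non-critical errors.

    error_counts: list of (critical, non_critical) tuples, one per participant.
    This input layout is hypothetical, chosen only to keep the sketch short.
    """
    if not error_counts:
        raise ValueError("no participants recorded")
    error_free = sum(
        1 for critical, non_critical in error_counts
        if critical == 0 and non_critical == 0
    )
    return 100.0 * error_free / len(error_counts)


# Made-up counts for 7 participants on one task.
sample_counts = [(0, 0), (0, 2), (1, 0), (0, 0), (0, 1), (0, 0), (0, 0)]

print(f"Error-free rate: {error_free_rate(sample_counts):.1f}%")
```

Because non-critical errors also count here, the error-free rate can never be higher than the completion rate for the same task.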

Time on Task (TOT)

The time to complete a scenario is referred to as "time on task". It is measured from the time the person begins the scenario to the time they signal completion.
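A minimal sketch of how time on task might be derived from logged timestamps follows. The timestamps are invented, and the choice of mean, median, and maximum as summary statistics is an assumption; the study does not state which summaries were reported.

```python
from statistics import mean, median


def time_on_task(start_s, end_s):
    """Seconds between the start of a scenario and the participant's completion signal."""
    if end_s < start_s:
        raise ValueError("completion signal precedes scenario start")
    return end_s - start_s


def summarize(durations):
    """Summary statistics for a list of time-on-task values, in seconds."""
    return {
        "mean": mean(durations),
        "median": median(durations),
        "max": max(durations),
    }


# Hypothetical (start, end) timestamps in seconds from session start.
logged = [(0.0, 95.0), (10.0, 130.0), (5.0, 65.0)]
durations = [time_on_task(start, end) for start, end in logged]

print(durations)
print(summarize(durations))
```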

Subjective Measures

Subjective opinions about specific tasks, time to perform each task, features, and functionality are surveyed. At the end of the test, participants rate their satisfaction with the overall system. Combined with the interview/debriefing session, the data is used to assess attitudes of the participants.

Problem Severity

To prioritize recommendations, a method of problem severity classification will be used in the analysis of the data collected during evaluation activities. The approach treats problem severity as a combination of two factors - the impact of the problem and the frequency of users experiencing the problem during the evaluation.

Impact

Impact ranks the consequences of a problem according to how strongly it affects successful task completion. There are three levels of impact:

High - prevents the player from completing the task (critical error)

Moderate - causes player difficulty but the task can be completed (non-critical error)

Low - minor problems that do not significantly affect the task completion (non-critical error)

Frequency

Frequency is the percentage of participants who experience the problem when working on a task.

High: 30% or more of the participants experience the problem

Moderate: 11% - 29% of participants experience the problem

Low: 10% or fewer of the participants experience the problem
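The frequency bands above can be expressed as a small function. This is only a sketch: the function name is hypothetical, and because the stated bands leave gaps for fractional percentages (between 10% and 11%, and between 29% and 30%), it assumes that anything above 10% and below 30% counts as Moderate.

```python
def frequency_level(affected, total):
    """Classify how often a problem occurred among participants.

    Assumption: values strictly between 10% and 30% are treated as Moderate,
    which fills the gaps left by the whole-number bands in the text.
    """
    if total <= 0 or not 0 <= affected <= total:
        raise ValueError("affected must be between 0 and total, with total > 0")
    percent = 100.0 * affected / total
    if percent >= 30:
        return "High"
    if percent > 10:
        return "Moderate"
    return "Low"


# With 7 participants, a single affected participant is already about 14%.
for affected in range(4):
    print(affected, "of 7:", frequency_level(affected, 7))
```

With a panel of 7, one or two affected participants land in Moderate and three or more land in High, so any problem that was observed at all is at least Moderate frequency.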

Problem Severity Classification

The identified severity for each problem implies a general reward for resolving it, and a general risk for not addressing it, in the current release.

Severity 1 - High impact problems that often prevent a player from correctly completing a task. They occur with varying frequency and tend to result in loss of player loyalty. Reward for resolution is typically exhibited in fewer forum complaints and reduced redevelopment costs.

Severity 2 - Moderate to high frequency problems with moderate to low impact; these are typically erroneous actions that the participant recognizes need to be undone. Reward for resolution is typically exhibited in reduced time on task and more enjoyable player experiences.

Severity 3 - Either moderate impact problems with low frequency or low impact problems with moderate frequency; these are minor annoyances faced by a number of participants. Reward for resolution is typically exhibited in reduced time on task and more enjoyable player experiences.

Severity 4 - Low impact problems faced by few participants; there is low risk to not resolving these problems. Reward for resolution is typically exhibited in increased player satisfaction.
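One way to read the four severity levels as a lookup on impact and frequency is sketched below. The function is hypothetical, and it makes one explicit assumption: a low impact problem with moderate frequency fits the wording of both Severity 2 and Severity 3, and the sketch assigns it to Severity 3 because that description names the combination specifically.

```python
LEVELS = {"high", "moderate", "low"}


def severity(impact, frequency):
    """Map an impact level and a frequency level to a severity from 1 to 4."""
    impact = impact.lower()
    frequency = frequency.lower()
    if impact not in LEVELS or frequency not in LEVELS:
        raise ValueError("levels must be 'high', 'moderate', or 'low'")

    if impact == "high":
        return 1  # high impact at any frequency
    if impact == "moderate":
        return 3 if frequency == "low" else 2
    # Low impact from here on.
    if frequency == "high":
        return 2
    if frequency == "moderate":
        return 3  # assumption: the more specific Severity 3 wording wins
    return 4


for impact in ("High", "Moderate", "Low"):
    for frequency in ("High", "Moderate", "Low"):
        print(f"{impact:8} impact, {frequency:8} frequency -> Severity {severity(impact, frequency)}")
```

If the team prefers to treat low impact, moderate frequency problems as Severity 2, only the marked line needs to change.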

Reporting Results

The usability test report is provided at the conclusion of the analysis of the usability test. It consists of a report and/or a presentation of the results that evaluates the usability metrics against the pre-approved goals and covers subjective evaluations, specific usability problems, and recommendations for resolution.

Woolly Walrus Games Inc.

For further information: Kat Feldman

Primary Investigator: Kat Feldman

Tel: ***-***-**07

Email: *************@gmail.com

Wednesday, November 27th, 2019

Beat Blast

Consent Form

I, (please print)__________________________________________ have read and understood the information on the research project Beat Blast which is to be conducted by Kat Feldman and all questions have been answered to my satisfaction.

I agree to voluntarily participate in this research and give my consent freely. I understand that the project will be conducted in accordance with the Information Letter, a copy of which I have retained for my records.

I understand I can withdraw from the project at any time, without penalty, and do not have to give any reason for withdrawal.

Print Name: _____________________________________

Signature: _______________________________________

Date: ___________________________________________

This project has been approved by the George Brown College Research Ethics Board, Approval No. 30662.

Should you have any concerns about your rights as a participant in this research, or a complaint about the manner in which the research is conducted, please get in touch with the Chair of the REB at ResearchEthics@georgebrown.ca.

It was important to recruit candidates for this usability test who varied across demographic segments. This allowed us to gain insights with as little bias as possible. Personal information about candidates was always collected with caution and consideration, both to get the best results and to respect the participants.

Students were selected from George Brown College to participate in this usability test. They ranged in age from the youngest available to the oldest available. Their gameplay experience and gender also varied.

After implementing the recommendations provided, the game saw the following results:

Tutorial completion rate increased from 8% to 96%

Signposting and text in the game were among the game's most popular updates